Artificial Intelligence (AI) is advancing rapidly, and one of the most significant developments is the rise of the multimodal AI agent. These are intelligent systems that combine text, images, audio, and other inputs to deliver human-like understanding and responses. For any business whose goal is to build more efficient, intuitive, and context-aware AI solutions, they are a game-changing option.

In this blog, you will learn what multimodal AI agents are, how they work, where they add real value, and why they are the future of AI-powered experiences.

Whether you are a developer, a startup founder, an enterprise leader, or a tech enthusiast, this guide covers what multimodal AI is, what these agents can do, and how to start building your own.

What is a Multimodal AI Agent?

Multimodal AI agents are intelligent systems that perceive, decide, and interact with digital environments using more than one type of input.

In simpler terms, a multimodal AI agent is a shift from how AI traditionally operates. Traditional AI models often use a single type of data, such as text or images, whereas a multimodal AI agent can process multiple data types at the same time. These input types are referred to as modalities, and they include:

- Text (Natural language)

- Images (Visual recognition)

- Audio (Speech & sound)

- Video (Motion & action)

- Sensor data (GPS, temperature)

Multimodal AI agents combine these inputs to interpret context with greater accuracy and depth. Instead of processing only what a person says, a multimodal agent can also read their facial expressions, detect their tone of voice, and analyze the visual environment. This results in more intelligent and human-aware interactions.

For all these reasons, multimodal AI agents are becoming essential components in agentic AI development, where smart systems need to adapt, think, and make decisions on their own.

Why is Multimodal AI the Future of Agentic AI Development?

Agentic AI involves building smart systems that can think, make decisions, and take action on their own. Multimodal AI agents are a leap forward: they enable AI systems to work with multiple inputs such as text, images, and voice, allowing businesses to develop applications that interact with the real world much as humans do.

Traditional single-modal agents fail to respond when the environment demands context beyond the trained input. In contrast, multimodal AI systems and multi-sensor AI agents offer better understanding, making them perfect for industries like healthcare, robotics, and autonomous vehicles.

How Do Multimodal AI Agents Work?

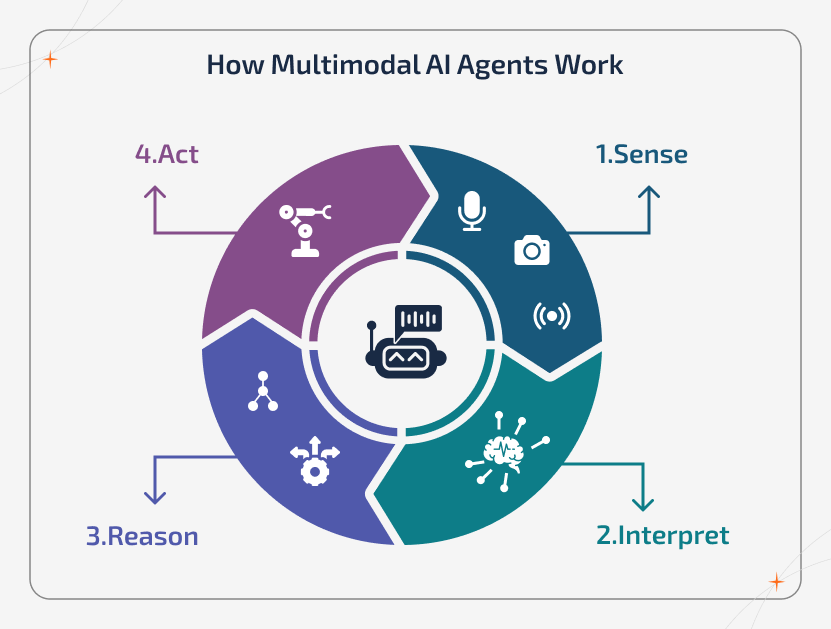

A multimodal AI agent is less complex than it may seem. It processes multiple types of input at the same time and turns them into unified understanding, reasoning, and action. Instead of treating text, image, audio, and sensor data as separate systems, it learns to interlink them into a single contextual view of the environment. This allows the system to understand what is happening, why it is happening, and what should be done next.

At a functional level, the AI agent operates in a continuous intelligence loop: it senses what is happening through different inputs, interprets it, decides, acts, and then senses again. This allows it to adapt in real time, operate on its own, and make decisions that feel natural across digital and physical environments.

The Complete Architecture of a Multimodal AI Agent

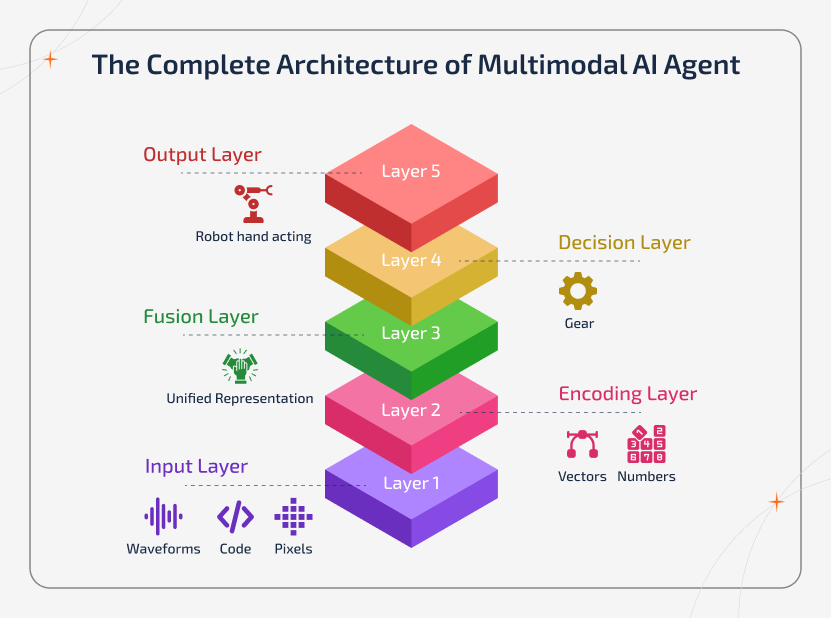

A multimodal AI agent is built on a layered architecture that transforms raw data into intelligent action. Each layer plays a unique role in transforming perception into reasoning and reasoning into execution.

Input Layer

In this layer, the agent collects data from multiple sources, including cameras, microphones, text inputs, video streams, and physical sensors. Acting as the perception layer of the system, it captures real-world signals from different modalities in real time.

Encoding Layer

In this layer, the raw inputs are transformed into structured representations known as embeddings. Text, images, and audio are converted into numerical vectors that preserve meaning, context, and relationships across modalities.

Fusion Layer

The fusion layer blends embeddings from the various modalities into a unified representation. Rather than only processing each input separately, the system learns shared meaning across them. This is the layer where cross-modal understanding is formed and fragmented perception becomes contextual intelligence.

Decision Layer

This layer applies reasoning, logic, memory, and learning mechanisms. The mechanisms include reinforcement learning and policy models. It further evaluates the fused information, predicts outcomes, and decides what action the agent must take.

Output Layer

The output layer turns intelligence into real-world impact. This includes triggering workflows, generating responses, activating systems, or interacting with users and environments. This is where understanding becomes action.

Altogether, these layers form a continuous intelligence pipeline where the perception flows into understanding, reasoning, and execution.
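The five layers above can be sketched as a small pipeline. This is a minimal illustration rather than a production design: the toy encoders, the `ALARM_WORDS` list, the thresholds, and the action names are all hypothetical stand-ins for trained models and real integrations.

```python
from typing import Callable, Dict, List

ALARM_WORDS = {"help", "fire", "urgent"}  # hypothetical keyword list

# Encoding layer: toy encoders standing in for trained models (assumption).
def encode_text(text: str) -> List[float]:
    words = text.lower().split()
    # Fraction of words that are alarm words.
    return [sum(w in ALARM_WORDS for w in words) / max(len(words), 1)]

def encode_audio(samples: List[float]) -> List[float]:
    # Mean absolute amplitude as a crude loudness feature.
    return [sum(abs(s) for s in samples) / max(len(samples), 1)]

ENCODERS: Dict[str, Callable[..., List[float]]] = {
    "text": encode_text,
    "audio": encode_audio,
}

# Fusion layer: concatenate embeddings in sorted modality order.
def fuse(embeddings: Dict[str, List[float]]) -> List[float]:
    fused: List[float] = []
    for name in sorted(embeddings):
        fused.extend(embeddings[name])
    return fused

# Decision layer: hand-written rules standing in for a learned policy.
def decide(fused: List[float]) -> str:
    loudness, alarm_ratio = fused  # layout after sorting: [audio, text]
    if alarm_ratio > 0 and loudness > 0.5:
        return "escalate"
    if alarm_ratio > 0:
        return "ask_follow_up"
    return "respond_normally"

# Output layer: map the decision to a message (placeholder for real actions).
def act(decision: str) -> str:
    messages = {
        "escalate": "Connecting you to a human operator now.",
        "ask_follow_up": "Can you tell me more about what is happening?",
        "respond_normally": "How can I help you today?",
    }
    return messages[decision]

# Input layer: the caller passes raw inputs keyed by modality name.
# This sketch expects both "text" and "audio" to be present.
def run_agent(inputs: Dict[str, object]) -> str:
    embeddings = {name: ENCODERS[name](raw) for name, raw in inputs.items()}
    return act(decide(fuse(embeddings)))

print(run_agent({"text": "help there is a fire", "audio": [0.9, -0.8, 0.7]}))
```

The point of the sketch is the shape of the flow: the same words lead to a different action depending on how loud the audio is, which is something a text-only system could not distinguish.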

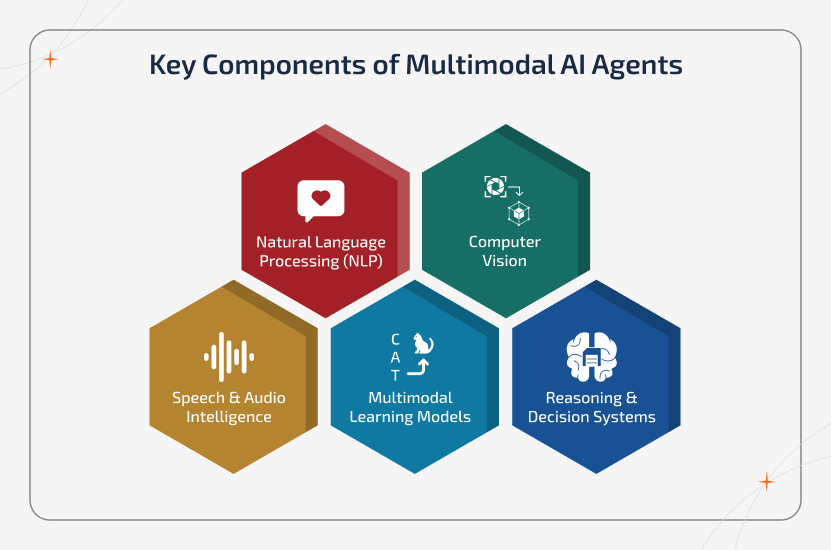

Key Components of Multimodal AI Agents

Multimodal AI agents are built from a few core technologies that work together to help the system understand its environment and take appropriate action.

Natural Language Processing (NLP)

NLP is the primary component that helps the AI agent understand and produce human language. It figures out what people mean, not just what they say. It handles emotion, tone, and context so that conversations feel natural instead of robotic.

Computer Vision

Computer vision is what lets an AI agent see the world. It interprets images and videos, recognizes objects, reads the environment, and picks up the visual details that humans usually notice. This gives the agent real visual awareness.

Speech & Audio Intelligence

This component helps the agent understand tone, accent, voice, and sound. It listens to how things are said, not just the words themselves. Emotion, stress, and background sounds all help the agent understand the situation better and respond more naturally.

Multimodal Learning Models

Multimodal learning models connect everything together. They help the AI agent understand how text, images, and other inputs relate to each other. Instead of treating the data as separate pieces, the AI agent learns the full picture, much as humans do.

Reasoning & Decision Systems

This is the thinking part. It helps AI agents remember things, understand context, make choices, and decide what to do next. This is what turns understanding into action and makes the agent feel intelligent.

Multimodal AI Agents vs Single-Modal AI - Know the Differences

The major difference between multimodal and single-modal AI agents is their scope of perception and reasoning.

Simply put, single-modal agents focus on one type of input and have limited flexibility. In contrast, multimodal agents can evaluate multiple data types, such as speech tone, facial expressions, and textual sentiment, simultaneously. Thus, they offer more accurate, context-rich decisions in healthcare, customer service, and finance. Here are the key differences between these two types of agents.

| Aspect | Single-Modal AI Agents | Multimodal AI Agents |
|---|---|---|
| Input Types | Single data type (text or image or audio) | Multiple data types (text + image + audio + sensors) |
| Understanding | Isolated interpretation | Contextual and cross-modal understanding |
| Decision Quality | Limited and reactive | Rich, adaptive, and situational |
| Flexibility | Low adaptability to new scenarios | High adaptability across environments |
| Real-World Capability | Works in controlled settings | Works in complex and real-world conditions |
| Use Case Power | Task automation in AI workflow | Intelligence-driven autonomy |

To make it even simpler,

Single-modal AI = Smart tools

Multimodal AI agents = Intelligent systems

One processes the data, and the other understands reality.

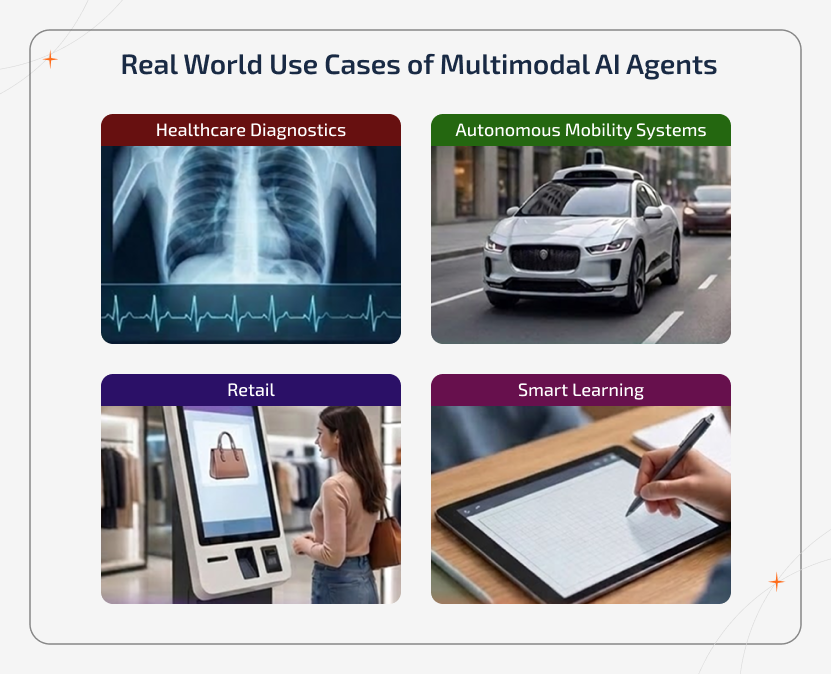

Real-World Use Cases of Multimodal AI Agents

Multimodal AI applications show how combining different types of data produces smarter and more powerful results. Here are some real-world examples of multimodal AI agents:

Healthcare Diagnostics

With healthcare diagnostic agents, hospitals and clinics can combine patient speech, medical records, imaging scans, and vital signs to support risk detection and decision-making. By interpreting symptoms across modalities, these agents can improve speed, accuracy, and treatment planning.

Autonomous Mobility Systems

By fusing radar, video, LiDAR, GPS, and other sensor inputs, multimodal AI agents enable real-time perception, hazard detection, and adaptive driving behaviour in complex physical environments.

Retail

An intelligent retail AI agent interprets facial expressions, voice tone, browsing behaviour, and purchase history to deliver hyper-personalized recommendations and predictive analytics for customer engagement.

Smart Learning

Educational AI agents use voice, gestures, handwriting, and written input to understand each learner's patterns and adapt content delivery in real time for personalized and responsive education experiences.

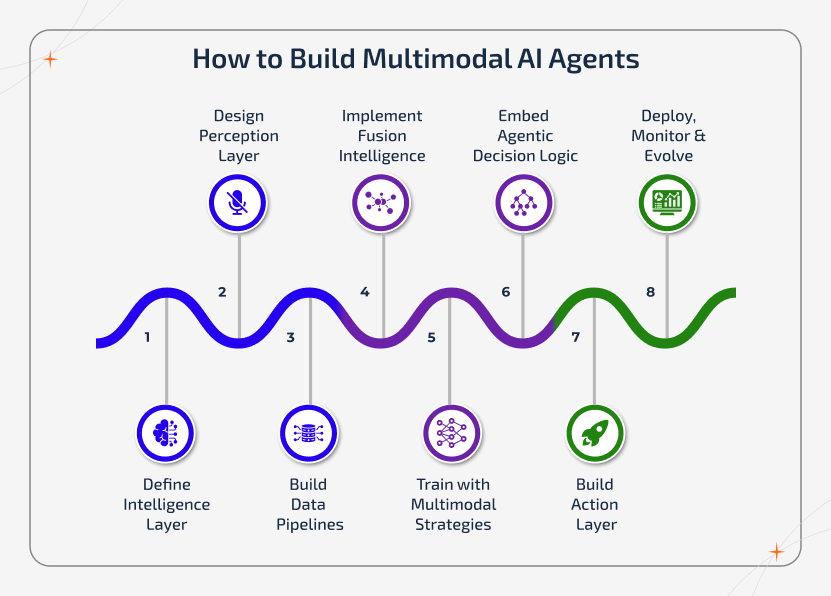

How to Build Multimodal AI Agents

Building AI agents with multimodal models combines software engineering with machine learning, data fusion, and agentic logic. The steps to build a multimodal AI agent are as follows:

Step 1: Define Intelligence Layer

Before you select any modality or technology, define what the AI agent is meant to do.

While defining, ask:

- What decisions will the agent make autonomously?

- What environments will it operate in?

- What risks must it avoid?

- What actions will it trigger?

The agent is more than a model: it acts as a decision-making system, so clarity at this stage determines the entire multimodal AI architecture. This step reframes the project from training a model to designing a system that makes decisions.

Step 2: Design the Perception Layer

Once the purpose has been defined, choose the right modalities. Instead of adding text, vision, and audio indiscriminately, make sure each modality serves a purpose in perception and reasoning.

For example,

- Text (language understanding)

- Audio (speech, tone, and ambient sound)

- Vision (images, video, and gestures)

- Sensors (IoT, GPS, temperature, motion, and biometrics)

The ultimate goal is to create a perception system that mirrors how the human mind understands the world, with multiple senses working together (for example, eyes + ears + memory + context + reasoning).

Step 3: Build Multimodal Data Pipelines

A multimodal AI system depends on properly aligned data to deliver accurate output. This means the data must be paired and synchronized across modalities.

This includes:

- Image ↔ Caption pairing

- Video ↔ Audio synchronization

- Sensor ↔ Event labeling

- Instruction ↔ Visual context linking

This is the stage where data preprocessing, normalization, synchronization, and labeling become crucial. Proper alignment ensures that all modalities describe the same event, intent, or object, which is what enables contextual understanding instead of fragmented perception.
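As one illustration of synchronization, video frames and audio chunks can be paired by timestamp. The sketch below is a minimal example under stated assumptions: each sample is a hypothetical `(timestamp, identifier)` tuple, audio timestamps are already sorted, and the `tolerance` value is arbitrary. Real pipelines usually also have to handle clock offsets and differing sample rates.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple

Sample = Tuple[float, str]  # (timestamp in seconds, payload identifier)

def nearest_index(times: List[float], t: float) -> int:
    """Return the index of the entry in sorted, non-empty `times` closest to `t`."""
    i = bisect_left(times, t)
    if i == 0:
        return 0
    if i == len(times):
        return len(times) - 1
    # Pick whichever neighbour is closer; ties go to the earlier one.
    return i if times[i] - t < t - times[i - 1] else i - 1

def align(frames: List[Sample], audio: List[Sample],
          tolerance: float) -> List[Tuple[str, Optional[str]]]:
    """Pair each frame with the nearest audio chunk within `tolerance` seconds."""
    audio_times = [t for t, _ in audio]  # assumed sorted ascending
    pairs: List[Tuple[str, Optional[str]]] = []
    for t, frame in frames:
        match: Optional[str] = None
        if audio_times:
            j = nearest_index(audio_times, t)
            if abs(audio_times[j] - t) <= tolerance:
                match = audio[j][1]
        # Frames with no audio inside the tolerance are kept but flagged as None.
        pairs.append((frame, match))
    return pairs

frames = [(0.00, "frame_0"), (0.04, "frame_1"), (0.50, "frame_2")]
audio = [(0.01, "chunk_0"), (0.05, "chunk_1")]
pairs = align(frames, audio, tolerance=0.02)
print(pairs)
```

Keeping unmatched frames with a `None` partner, rather than silently dropping them, makes gaps in the data visible during labeling and review.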

Step 4: Implement Fusion Intelligence

Once the data is aligned, the system architecture must support multimodal learning. This involves designing how the inputs are encoded, transformed into embeddings, and combined into a unified representation.

Here, you define how the modalities talk to each other:

- Feature fusion (early fusion)

- Representation fusion (mid fusion)

- Decision fusion (late fusion)

This fusion layer becomes the core of the AI agent, which allows it to interpret complex real-world situations instead of processing isolated signals.
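The three fusion styles listed above differ mainly in where the modalities are combined. The following sketch shows one simple form of each, using plain Python lists. The function names are hypothetical, and the averaging in `mid_fusion` is an assumption made for brevity: real systems typically use learned projections or attention at that stage.

```python
from typing import Dict, List

def early_fusion(features: Dict[str, List[float]]) -> List[float]:
    """Feature fusion: concatenate raw feature vectors into one model input."""
    fused: List[float] = []
    for name in sorted(features):  # sorted so the layout is stable
        fused.extend(features[name])
    return fused

def mid_fusion(embeddings: Dict[str, List[float]]) -> List[float]:
    """Representation fusion: element-wise mean of same-sized embeddings."""
    vectors = list(embeddings.values())
    size = len(vectors[0])
    if any(len(v) != size for v in vectors):
        raise ValueError("embeddings must share one dimension")
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(size)]

def late_fusion(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Decision fusion: weighted average of per-modality model scores."""
    total = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total

print(early_fusion({"text": [0.1, 0.2], "image": [0.9]}))
print(mid_fusion({"text": [1.0, 3.0], "image": [3.0, 5.0]}))
print(late_fusion({"text": 0.8, "image": 0.4}, {"text": 1.0, "image": 3.0}))
```

As a rough guide, early fusion lets a single model learn interactions between raw features, while late fusion keeps each modality's model independent, which makes it easier to drop or replace a modality later.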

Step 5: Train with Multimodal Learning Strategies

Training a multimodal AI agent requires more than a single basic training run. Instead, it uses layered learning approaches:

- Pre-training on large multimodal datasets

- Contrastive learning for cross-modal alignment

- Fine-tuning on the domain-specific data

- Transfer learning from pre-trained models to reduce AI agent development costs

- Self-supervised learning for effective scaling

This phase creates deep cross-modal understanding rather than surface-level integration.
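One widely used objective for cross-modal alignment is a contrastive loss, which pulls matched pairs (for example, an image and its caption) together and pushes mismatched pairs apart. The sketch below computes an InfoNCE-style loss in the image-to-text direction only, in plain Python. The function names and the `temperature` value are illustrative assumptions, and the embeddings are assumed to be non-zero vectors.

```python
import math
from typing import List

def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def contrastive_loss(image_embs: List[List[float]],
                     text_embs: List[List[float]],
                     temperature: float = 0.1) -> float:
    """Mean cross-entropy where image i should match text i and no other."""
    n = len(image_embs)
    loss = 0.0
    for i in range(n):
        logits = [cosine(image_embs[i], text_embs[j]) / temperature
                  for j in range(n)]
        # Numerically stable log-sum-exp over all candidate texts.
        peak = max(logits)
        log_sum = peak + math.log(sum(math.exp(l - peak) for l in logits))
        loss += log_sum - logits[i]
    return loss / n

images = [[1.0, 0.0], [0.0, 1.0]]
aligned_texts = [[1.0, 0.0], [0.0, 1.0]]   # text i matches image i
swapped_texts = [[0.0, 1.0], [1.0, 0.0]]   # every pair is mismatched

print(contrastive_loss(images, aligned_texts))
print(contrastive_loss(images, swapped_texts))
```

The loss is close to zero when each image is most similar to its own text and grows when the pairs are mismatched, which is the signal training uses to bring the two embedding spaces into alignment.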

Step 6: Embed Agentic Decision Logic

This is the step where the system becomes an AI agent instead of just a model. Decision-making layers are introduced, including:

- Memory modules

- Goal planning systems

- Context tracking

- Reinforcement learning

- Rule-based safety layers

- Policy engines

This step actually enables the following:

- Autonomous decisions

- Adaptive behaviour

- Multi-step reasoning

- Environment-aware actions
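A few of the decision components in this step (a memory module, a policy, and a rule-based safety layer) can be sketched together. Everything here is hypothetical: the `Agent` class, the `risk` and `door_request` observation keys, the thresholds, and the action names are invented for illustration, and the hand-written policy stands in for a learned one.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict

GUARDED_ACTIONS = {"unlock_door"}  # hypothetical actions that need a safety check

@dataclass
class Agent:
    # Memory module: keeps only the three most recent observations.
    memory: Deque[Dict[str, float]] = field(
        default_factory=lambda: deque(maxlen=3))

    def policy(self, obs: Dict[str, float]) -> str:
        """Propose an action from the current observation plus recent context."""
        self.memory.append(obs)
        avg_risk = sum(o["risk"] for o in self.memory) / len(self.memory)
        if avg_risk > 0.7:
            return "raise_alarm"
        if obs.get("door_request", 0.0) > 0.5:
            return "unlock_door"
        return "wait"

    def safety_layer(self, action: str, obs: Dict[str, float]) -> str:
        """Rule-based override: guarded actions need low current risk."""
        if action in GUARDED_ACTIONS and obs["risk"] > 0.3:
            return "ask_human"
        return action

    def step(self, obs: Dict[str, float]) -> str:
        return self.safety_layer(self.policy(obs), obs)

agent = Agent()
actions = [
    agent.step({"risk": 0.1, "door_request": 1.0}),
    agent.step({"risk": 0.5, "door_request": 1.0}),
    agent.step({"risk": 0.9}),
    agent.step({"risk": 0.95}),
]
print(actions)
```

Placing the safety rules after the policy, instead of inside it, means they still apply if the policy is later replaced by a trained model.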

Step 7: Build Action & Interface Layer

Now the multimodal AI agent needs the means to act. This step focuses on integrating the following:

- APIs

- Dashboards

- System triggers

- Workflow automation

- Human feedback loops

- Robotics interfaces

Whether it triggers the workflow, interacts with users, controls systems, or supports operations, this layer converts intelligence into real-world impact.

Step 8: Deploy, Monitor & Evolve

Deployment is not the final stage of building a multimodal AI agent. It is more like the starting point of the agent’s lifecycle. Post-deployment work involves:

- Real-time monitoring

- Drift detection

- Performance evaluation

- Feedback learning loops

- Continuous retraining

- Security and compliance layers

Multimodal AI agents must evolve with changing environments, user behaviour, and data patterns to stay effective and relevant.
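Drift detection, one of the monitoring tasks listed above, can start with something as simple as comparing a live feature's mean against a reference window. The sketch below is a deliberately basic heuristic, not a full statistical test. The function names, the sample values, and the threshold of 3.0 are assumptions chosen for illustration.

```python
from statistics import mean, stdev
from typing import List

def drift_score(reference: List[float], live: List[float]) -> float:
    """Shift of the live mean, measured in reference standard deviations."""
    spread = stdev(reference)  # needs at least two reference points
    shift = abs(mean(live) - mean(reference))
    if spread == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / spread

def has_drifted(reference: List[float], live: List[float],
                threshold: float = 3.0) -> bool:
    """Flag drift when the mean has moved more than `threshold` deviations."""
    return drift_score(reference, live) > threshold

# Hypothetical values of one monitored feature, such as average audio loudness.
reference = [0.50, 0.52, 0.48, 0.51, 0.49]
stable_batch = [0.51, 0.49, 0.50]
shifted_batch = [0.80, 0.82, 0.78]

print(has_drifted(reference, stable_batch))
print(has_drifted(reference, shifted_batch))
```

In a multimodal system it is worth tracking a score like this per modality, since a camera or microphone can drift on its own while the other inputs stay stable.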

In the meantime, if you are looking to speed up the development process, partner with an AI agent development company to get custom solutions that align with your business needs.

Multimodal Dataset Quality Checklist

Before you train anything, your dataset should pass the sanity checks below:

✅ Coverage: Different scenarios, environments, lighting, behaviours, accents, and contexts.

✅ Bias Control: Avoid skewed demographic, geographic, and behavioral data.

✅ Low Noise: Misaligned captions, wrong labels, and corrupted audio will cause broken intelligence.

✅ Consistency: The same object and event mean the same thing across the modalities.

✅ Balance: All modalities should be fairly represented (for example, not 90% text and 10% images).

✅ Real-World Variance: Not just perfect studio data, but messy real-world data too.
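Some items on this checklist can be checked automatically. The sketch below covers two of them, modality balance and samples with missing modalities, under the assumption that each sample is a dictionary with an `id` and one key per modality. The `REQUIRED` tuple, the field names, and the file names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional

REQUIRED = ("text", "image", "audio")  # hypothetical modality names

SampleT = Dict[str, Optional[object]]

def modality_share(samples: List[SampleT]) -> Dict[str, float]:
    """Fraction of all present modality entries contributed by each modality."""
    counts = Counter(m for s in samples for m in REQUIRED
                     if s.get(m) is not None)
    total = sum(counts.values())
    return {m: (counts[m] / total if total else 0.0) for m in REQUIRED}

def incomplete_samples(samples: List[SampleT]) -> List[object]:
    """IDs of samples that are missing at least one required modality."""
    return [s["id"] for s in samples
            if any(s.get(m) is None for m in REQUIRED)]

samples = [
    {"id": 1, "text": "a dog barking", "image": "dog.png", "audio": "bark.wav"},
    {"id": 2, "text": "a cat sleeping", "image": None, "audio": None},
]

print(modality_share(samples))
print(incomplete_samples(samples))
```

Checks like coverage, bias, and real-world variance are harder to automate and usually still need manual review of sampled data.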

Top Platforms to Consider for Multimodal AI Agent Development

Whether you're a developer or company owner, the following are the top AI agent platforms and tools you should look into for building multimodal AI agents:

OpenAI - CLIP & GPT-4o

CLIP links images and text in a shared embedding space, while GPT-4o reasons across text, images, and audio. Together, they suit AI agents that need to understand and respond in real time.

Meta AI - ImageBind

It provides a unified cross-modal embedding space. This allows AI agents to connect multiple sensory inputs without requiring paired training data for every combination of modalities.

Google DeepMind - Flamingo

It supports few-shot vision-language learning, which enables AI agents to interpret visual context and generate precise responses.

HuggingFace

It offers a flexible and open-source ecosystem with pretrained multimodal models and datasets. This is used for rapid prototyping and custom AI agent development.

Rasa & LangChain

These frameworks enable developers to orchestrate conversational AI with external tools, memory, and multimodal capabilities for building end-to-end intelligent agent systems.

Moreover, developers can also consider choosing open-source tools like HuggingFace Transformers for flexibility and fast prototyping.

Cost of Building & Implementing Multimodal AI Agents

So, how much does multimodal AI software development actually cost? Understanding the cost of implementation is as important as understanding the technical considerations.

The factors that influence the cost of multimodal agent development include:

- Complexity of the modalities

- Custom vs. off-the-shelf

- Data collection & labeling

- Development platform & tools

- Integration with existing systems

Approximate Cost Estimates:

| Stage | Scope | Typical Cost |
|---|---|---|
| Prototype | Basic multimodal concept, 1-2 modalities, and a small dataset | $10k - $50k |
| Pilot | Real users, multiple modalities, and initial real-world testing | $50k - $200k |
| Production | Full-scale deployment, real-time processing, and enterprise-ready | $200k - $1M+ |

Hidden Costs to Watch:

- Data labeling and preprocessing

- Evaluation and testing

- Real-time infrastructure

- Maintenance and retraining

If you think the development is going over budget, here are some possible ways to save costs:

- Start small with a pilot before scaling up.

- Choose open-source AI agent tools.

- Partner with an AI development company to obtain modular or reusable frameworks.

- Minimize upfront costs by combining single-modal AI with multimodal AI capabilities to create hybrid agents.

Challenges Associated with Building Multimodal AI Agents

Despite the potential advantages, building multimodal AI agents does come with its own challenges. These include, but are not limited to:

- Aligning different data types is not just complex but also time-consuming.

- These models require larger datasets and more computing power.

- Because different modalities are involved, contradictory signals can occur.

- Real-time processing across inputs can slow down performance.

- The agent’s decisions become harder to explain as more data layers are added.

Overcoming these challenges is critical and requires solid data pipelines, robust models, and an experienced development team like Sparkout.

Why Choose Sparkout Tech as Your AI Agent Development Company

Whether you're starting to integrate AI from scratch or have a vision and want to bring it to life, Sparkout Tech is the trusted AI agent development company to reach out to. We don’t just build AI agents. Instead, we architect intelligent ecosystems that drive measurable business impact.

Deep Agentic Expertise

The expert team at Sparkout specializes in designing autonomous AI agents that are capable of reasoning, planning, decision-making, and contextual execution across complex workflows.

Enterprise-Grade Infrastructure

Sparkout leverages robust and scalable AI development frameworks and platforms to ensure performance, reliability, and seamless system integration.

Advanced Capabilities

From text and vision to audio and structured enterprise data, Sparkout builds multimodal AI agents that fuse diverse data streams into intelligent and real-time actions.

Cloud-Native Deployment

Business-focused AI agents built by Sparkout support modern infrastructure and enable secure, scalable deployment across cloud-native, hybrid, and distributed environments.

Long-Term Support

Each and every solution developed by Sparkout is tailored to your industry, workflows, and compliance needs. Beyond deployment, we provide continuous optimization, monitoring, and evolution to keep the AI agents future-ready.

The Future of Multimodal AI Agents

Multimodal AI is moving towards even more powerful applications. Here are the trends that lie ahead:

- Robots and devices will understand their environment through vision, sound, and movement.

- Simulations powered by real-time multimodal data for manufacturing, healthcare, etc.

- AR/VR integration that offers smarter, more responsive virtual experiences using multimodal cues.

- The rise of Emotional AI, enabling systems to detect emotions from facial expressions, language, and voice.

- Unified foundation models like GPT-4o that allow agents to reason across any modality.

Conclusion

Autonomous agentic ecosystems are the future of multimodal AI agents as they can interact with environments, people, and other agents. Hence, we can expect them to navigate physical spaces, communicate naturally, and make real-time decisions.

As businesses and developers look forward to innovating, investing in multimodal AI solutions will be a strategic advantage in thriving in a dynamic environment. The integration of multi-modal AI frameworks with agentic AI development principles will bring about general-purpose AI agents with real-world impacts.

Ready to Build AI that Thinks Like Humans?

Partner with Sparkout Tech today and start your multimodal AI journey with expert guidance.